Introduction

AI chatbots have become the frontline of customer service operations across contact centers, BPOs, telecom providers, banking institutions, and eCommerce platforms. 85% of customer service leaders will explore or pilot a customer-facing conversational generative AI solution in 2025, yet chatbot accuracy has emerged as a critical operational concern.

The expectation gap is stark: customers demand instant, correct answers, but 64% would prefer that companies didn't use AI in their customer service, with 53% considering switching to a competitor over AI deployment concerns. That dissatisfaction rarely traces back to the AI model itself — it traces back to the knowledge layer powering it.

The evidence is concrete: 61% of customer service leaders report a backlog of knowledge articles that need editing, and more than one-third have no formal process for revising outdated content. This breakdown in knowledge management directly undermines chatbot performance — producing confident but wrong answers, unnecessary escalations, and degraded customer experience.

This guide breaks down how a chatbot actually uses its knowledge base to answer questions — as a defined, manageable process that organizations can control and continuously improve.

TL;DR

- A chatbot's knowledge base is a curated internal repository of FAQs, policies, SOPs, and product details that grounds every response in verified company information

- Chatbots process incoming queries, search the knowledge base semantically, and generate responses grounded in retrieved content

- Knowledge base quality, structure, and freshness determine how accurate chatbot responses actually are

- Outdated or poorly structured knowledge causes wrong answers and erodes customer trust

- Platforms like Knowmax manage the knowledge layer that feeds chatbots, keeping content structured and current so customers get accurate answers

What Is a Chatbot Knowledge Base?

A chatbot knowledge base is a structured, internal repository of an organisation's institutional knowledge—including FAQs, troubleshooting guides, product information, policies, SOPs, and customer interaction data—that the chatbot queries at runtime to generate answers.

A knowledge base is not the AI model's general training data, which is broad, often outdated, and lacks company-specific context. AWS defines it as a mechanism to "connect foundation models to your company data sources for retrieval augmented generation (RAG), to extend the power of foundation models" beyond their pre-trained knowledge.

That gap has real consequences. Without a knowledge base, chatbots rely on generalised model outputs that may hallucinate or miss critical company context. 39% of customer service chatbots required rework due to hallucination-related failures, and AI hallucinations cost businesses an estimated $67.4 billion globally in 2024.

Static vs. Dynamic Knowledge Base Integration

Two primary integration approaches exist:

- Static integration: Content is pre-trained or embedded during bot setup, creating a fixed knowledge snapshot

- Dynamic/RAG-style integration: Content is retrieved on demand at query time using real-time semantic search

Modern enterprise deployments favour dynamic integration. AWS Bedrock Knowledge Bases implements a two-phase system: a preprocessing stage where data is chunked, embedded, and indexed, followed by runtime execution where queries are converted to embeddings and matched against the vector database. The retrieved content is then augmented into the LLM context — ensuring chatbots access current information without retraining the model.

How Does a Chatbot Use Its Knowledge Base to Answer Questions?

When a customer sends a message, the chatbot executes a defined sequence—each step contributing to whether the final answer is accurate, relevant, and useful.

Query Reception and Language Processing

The process begins when a customer submits a message in natural language. This arrives as raw, unstructured text with no inherent label or category.

The chatbot's NLP (Natural Language Processing) layer processes this input by:

- Tokenising the message into analysable components

- Extracting entities such as product names, account references, or issue types

- Identifying intent—what the customer actually wants to accomplish, not just the words used

- Detecting sentiment or urgency signals that may affect routing

Microsoft Copilot Studio supports three NLU tiers, including advanced multi-intent recognition and silent entity extraction, demonstrating how sophisticated intent recognition has become.

Weak intent recognition is the first failure point. A bot that misreads intent will search the knowledge base for the wrong thing and return an irrelevant answer—regardless of how good the knowledge base content is.

Knowledge Base Search and Retrieval

Once intent is identified, the chatbot converts the processed query into a vector embedding—a numerical representation that captures semantic meaning. Similar questions receive similar vector representations even when phrasing differs.

This vector is compared against indexed embeddings of knowledge base content stored in a vector database. AWS Bedrock uses models like Amazon Titan Text Embeddings V2 (1024 dimensions) to encode semantic meaning, enabling similarity-based retrieval using distance metrics including cosine similarity and dot product.

The system identifies chunks of content with the closest semantic match—not just keyword overlap. This is where RAG (Retrieval-Augmented Generation) operates: the most relevant knowledge base chunks are retrieved and passed alongside the user's query to the language model, grounding the response in actual company data rather than model inference.

The choice of vector database determines retrieval speed and scale. Enterprise implementations commonly run on:

- Amazon OpenSearch Serverless

- MongoDB Atlas

- Pinecone

- Redis Enterprise Cloud

Relevance Scoring and Confidence Evaluation

The system evaluates retrieval quality through relevance scores and confidence thresholds. How a chatbot handles low-confidence matches determines whether it builds or destroys customer trust.

Three-tier escalation logic:

- High confidence → Respond directly with retrieved information

- Medium confidence → Respond but flag for human review

- Low confidence → Don't guess; escalate to a live agent with full conversation context

Leading implementations aim for human escalation rates below 15%, with systems like CarShield's AI Agent containing 66% of calls without human intervention.

Answering confidently with wrong information causes more customer damage than saying "I don't know." RAG reduces hallucination rates by up to 71% compared to ungrounded generation, but this only holds when confidence thresholds are properly calibrated.

Response Generation and Delivery

The language model takes the customer's query, retrieved knowledge base content, conversation history, and system-level instructions (tone, permitted scope, and brand voice guidelines) to generate a natural language response grounded in retrieved facts.

Grounding anchors the response to retrieved knowledge base content rather than the model's broad training data—this is what prevents hallucination in enterprise deployments. AWS Bedrock's RAG workflow uses 512-token chunks with 20% overlap to preserve context between adjacent segments, ensuring each response draws from complete, contextually accurate information.

The response is delivered to the customer in the appropriate channel—chat widget, WhatsApp, email, or IVR—with scope enforcement ensuring the bot stays within its defined domain.

What Goes Into an Effective Chatbot Knowledge Base?

A chatbot can only be as accurate as the knowledge it draws from. Get the structure wrong, and even a well-trained model returns outdated policies, contradictory guidance, or nothing at all.

Essential Content Types

An effective knowledge base must include:

- FAQs addressing common customer questions

- Policy documents covering returns, warranties, compliance

- Troubleshooting procedures for technical issues

- Product specifications and feature details

- Decision trees for complex, multi-step resolutions

- Escalation pathways defining when and how to route to humans

Content Structure: Chunking, Tagging, and Categorisation

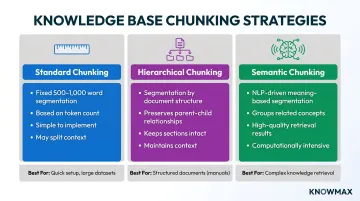

Well-structured content retrieves more accurately than large, unformatted documents. Three primary chunking strategies exist:

| Strategy | Method | Best For |

|---|---|---|

| Standard chunking | Fixed word/token counts (500-1,000 words) | Simple, consistent retrieval |

| Hierarchical chunking | Segmented by document structure (sections/subsections) | Preserving relationships |

| Semantic chunking | Segmented by meaning/topic using NLP | Most relevant retrieval results |

Essential metadata includes:

- Descriptive tags (keywords, issue categories)

- Status indicators (draft/published/archived)

- Temporal data (creation and update timestamps)

- Performance metrics (relevance scores, usage data)

- Unique Source IDs for traceability and version control

A SaaS company implementing structured semantic chunking and metadata saw a nearly 30% drop in average support response time.

Content Freshness: The Critical Factor

Stale knowledge bases are a primary driver of chatbot inaccuracy. When policies change, products update, or new issues emerge, the knowledge base must reflect those changes immediately.

A single outdated article in an AI-driven environment can influence thousands of conversations within hours. AI cannot apply judgement to recognise outdated or conflicting guidance; it retrieves and scales whatever it finds. If two contradictory troubleshooting articles exist, the chatbot may generate a response that reflects neither correctly.

Content governance requirements:

- Regular content audits (monthly minimum)

- Gap analysis from unanswered query logs

- Structured workflow for updating and validating content

- Version control ensuring all users see the most current content

- Real-time synchronisation across all channels

AI-Powered Knowledge Management Platforms

Meeting those governance requirements manually at scale is difficult. Platforms like Knowmax are built to handle exactly that — enabling teams to create, organise, update, and deliver structured knowledge across channels. Key capabilities include:

- Rephrases, summarises, and auto-translates content across 25+ languages using AI authoring tools

- Walks customers through branching resolution paths via interactive decision trees

- Feeds accurate, intent-mapped content into chatbots through CRM, helpdesk, and messaging integrations

- Identifies content gaps automatically from unanswered query logs

- Keeps only validated, approved content in circulation through authorisation workflows

By integrating with platforms like Salesforce, Zendesk, Genesys, Freshchat, and Talkdesk, knowledge management systems ensure chatbots have access to current, structured information at query time.

When Knowledge Base Quality Breaks Down

Common failure modes include:

Outdated content that generates confident but wrong answers

- Chatbots retrieve information that was accurate six months ago but no longer reflects current policy

- Customers receive incorrect return windows, pricing, or product specifications

- Trust in self-service channels erodes rapidly

Content gaps causing unnecessary escalations

- Queries about new products, seasonal policies, or emerging issues find no relevant knowledge base content

- The chatbot escalates unnecessarily, increasing agent workload and customer wait times

- Poorly maintained knowledge bases prevent chatbots from scaling effectively

Poorly structured documents confusing retrieval

- Large, unformatted documents split incorrectly during chunking

- Context is lost between chunks, leading to incomplete or misleading answers

- Semantic search fails when content lacks proper metadata and tagging

Inconsistent information across departments

- Different teams maintain separate versions of policies or procedures

- The chatbot retrieves conflicting answers depending on which content chunk matches the query

- Customers receive different answers to the same question on different days

Cascading Impact on Customer Experience Metrics

Knowledge base failures directly impact operational metrics:

- AHT climbs as agents spend time correcting chatbot mistakes and rebuilding customer trust

- FCR rates fall when incorrect chatbot responses send customers back to support a second or third time

- CSAT scores drop as customers lose confidence in self-service that gives them wrong answers

Comm100's 2026 benchmark data shows chatbot-specific satisfaction at only 49.3%, compared to 82% for human agents. However, bot-to-agent handoff satisfaction reached 92.6%—more than 10 points above overall CSAT—indicating that well-designed hybrid workflows outperform either channel alone.

McKinsey research puts the upside of AI-powered customer experience at 15-20% CSAT improvement, 5-8% revenue growth, and 20-30% cost-to-serve reduction. The condition: "properly-calibrated AI models accessing integrated data sets." Without that foundation, the gains don't materialise.

The Fix: Better Knowledge Management, Not Better AI

The data points in one direction: the bottleneck is rarely the AI model. It's the knowledge behind it. Fixing that requires process, not a model upgrade:

- Regular content audits reviewing accuracy and completeness

- Gap analysis from unanswered query logs identifying missing content

- Structured workflows for updating and validating knowledge before publication

- Performance monitoring tracking which articles drive successful resolutions vs. escalations

- Governance standards ensuring consistency across departments and channels

Conclusion

Chatbot accuracy is not primarily an AI problem — it is a knowledge management problem. The bot is only as reliable as the internal knowledge base it draws from.

Organisations that invest in structured, maintained, omnichannel knowledge management create a compound advantage: more accurate chatbots, faster agent resolution, lower training costs, and more consistent customer experiences across every touchpoint.

The AI model is the engine, but the knowledge base is the fuel. Without clean, current, well-structured content behind it, even the most sophisticated chatbot will stall on questions that a well-maintained knowledge base could answer in seconds.

Frequently Asked Questions

What is a chatbot knowledge base?

A chatbot knowledge base is a curated, internal repository of company-specific information (policies, FAQs, SOPs, product details) that the chatbot retrieves answers from at query time. It's distinct from the AI model's general training data, providing verified, organization-controlled facts rather than broad internet knowledge.

How does a chatbot find the right answer from a large knowledge base?

The chatbot converts the customer's query into a vector embedding that captures semantic meaning. This vector is matched against indexed knowledge base content to find the closest meaning match—not just keyword overlap—through retrieval-augmented generation (RAG), which grounds responses in actual company data.

What happens when a chatbot cannot find an answer in the knowledge base?

Well-designed systems detect low-confidence retrieval through relevance scoring and escalate the conversation to a human agent with full context. This prevents the chatbot from guessing and risking a wrong answer, maintaining customer trust while ensuring resolution.

How often should a chatbot knowledge base be updated?

Update the knowledge base whenever products, policies, or procedures change. Reviewing unanswered query logs monthly helps identify content gaps before they affect customer experience.

What types of content should be included in a chatbot's internal knowledge base?

Core content includes FAQs, troubleshooting guides, product and pricing details, policy documents, decision trees, and escalation procedures. Structure, tag, and validate everything regularly to keep chatbot responses accurate and reliable.

How is a chatbot knowledge base different from a general AI model?

A general AI model generates responses based on broad internet training data and can hallucinate plausible-sounding fiction. A knowledge base-connected chatbot retrieves answers from the organization's own verified content, giving it accuracy and auditability that generic AI simply cannot match.