Introduction

Picture a typical contact center: agents toggling between four or more systems, typing keywords into a static knowledge base, scanning through dozens of irrelevant articles while a customer waits on hold. This is no exaggeration: contact center agents navigate at least four different systems during their workday and spend 25% of their time searching for information across these fragmented platforms. The result? Inconsistent answers, frustrated customers, and average handle times that refuse to budge.

Generative AI is changing that. Where traditional knowledge bases force agents to hunt and interpret, generative AI knowledge systems understand intent, retrieve relevant content, and synthesize precise answers in real time, transforming how support knowledge is created, accessed, and delivered.

TLDR

- Generative AI knowledge bases use LLMs and RAG to understand intent and deliver precise answers across every support channel

- Unlike keyword search, they interpret what users actually need, even when the phrasing doesn't match stored content

- Key outcomes: 20–35% AHT reduction, higher FCR, faster onboarding, and consistent omnichannel delivery

- Top platforms pair semantic search and AI authoring with deep CRM/CCaaS integrations to eliminate knowledge silos

What Is a Generative AI Knowledge Base?

A generative AI knowledge base is a dynamic knowledge system powered by large language models that understands natural language queries, retrieves relevant content from structured and unstructured sources, and generates accurate, context-aware responses—rather than simply returning a list of articles.

How It Differs from Traditional Knowledge Bases

Traditional knowledge bases are static repositories that rely on keyword matching. An agent searches for "password reset authentication," and the system returns every article containing those exact terms—often dozens of irrelevant results that force agents to scan and interpret during live calls.

Generative AI knowledge bases use semantic understanding. They interpret what the user actually needs, even if the phrasing differs completely from stored content. That shift—from matching words to understanding intent—is what makes them meaningfully different.

The Role of Retrieval-Augmented Generation

Retrieval-augmented generation (RAG) is the technical foundation that makes generative AI knowledge bases reliable for enterprise support. The system first retrieves the most relevant content from your organization's knowledge sources, then uses a language model to synthesize a response grounded in that specific content rather than in general internet training data.

This two-step process is what keeps hallucination (the AI equivalent of fabricating answers) in check. A peer-reviewed study found that standard LLM chatbots hallucinated approximately 40% of the time, while RAG-based systems using curated sources reduced that rate to 0–6%.

For support teams, that distinction is critical. Answers stay on-brand, accurate, and compliant with company policies because the AI only works with verified internal content.
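The two-step retrieve-then-generate pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions: the in-memory `KNOWLEDGE_BASE`, its article ids, and the bag-of-words cosine similarity (standing in for a real embedding model) are all hypothetical. The sketch stops at building the grounded prompt and does not call any particular LLM API. Its relevance threshold shows one way a system can decline to answer when nothing relevant is retrieved, rather than guess.

```python
import math
import re
from collections import Counter
from typing import List, Optional, Tuple

# Hypothetical knowledge base: article id -> verified internal content.
KNOWLEDGE_BASE = {
    "kb-101": "To reset a password, open Settings, choose Security, and select Reset Password.",
    "kb-205": "If login fails after a password reset, clear cached credentials and retry.",
    "kb-310": "Refunds are processed within 5 business days of approval.",
}


def tokenize(text: str) -> Counter:
    """Lowercase bag of words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2, min_score: float = 0.2) -> List[Tuple[str, float]]:
    """Step 1: score every article, drop weak matches, keep the top k."""
    q = tokenize(query)
    scored = [(doc_id, cosine(q, tokenize(text))) for doc_id, text in KNOWLEDGE_BASE.items()]
    relevant = [pair for pair in scored if pair[1] >= min_score]
    return sorted(relevant, key=lambda pair: pair[1], reverse=True)[:k]


def build_grounded_prompt(query: str) -> Optional[str]:
    """Step 2 input: a prompt limited to retrieved content, or None to escalate."""
    hits = retrieve(query)
    if not hits:
        return None  # nothing relevant: hand off to a human rather than let a model guess
    sources = "\n".join(f"[{doc_id}] {KNOWLEDGE_BASE[doc_id]}" for doc_id, _ in hits)
    return (
        "Answer using ONLY the sources below and cite their ids. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


print(build_grounded_prompt("customer can't log in after password reset"))
```

With the sample query, both password articles are retrieved and the refund article is left out. An off-topic question returns `None`, which a real deployment might route to a human agent.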

Content Types and Capabilities

Generative AI knowledge bases ingest diverse content types:

- FAQs and help articles

- Product manuals and technical documentation

- Policy documents and compliance guidelines

- Troubleshooting guides and SOPs

- Past resolved tickets and case histories

- Both structured databases and unstructured documents

The "generative" capability means the system doesn't just fetch a document: it composes tailored responses, summarizes long documents, adapts tone for the audience, and translates answers into multiple languages. Some platforms extend this further with AI authoring tools that rephrase, summarize, and auto-translate content into 25+ languages, giving global support teams a consistent knowledge foundation regardless of where they operate.

Why Traditional Knowledge Bases Fall Short in Modern Support

The Search Problem: Long Lists and Wasted Time

Agents using traditional knowledge bases get long, irrelevant article lists in response to searches. This forces them to sift through content during live calls—directly increasing average handle time. McKinsey found that 30-40% of claim-related call time is "silent" because agents are searching for information.

When every search requires manual filtering, even experienced agents waste interaction time hunting instead of helping.

Knowledge Fragmentation Across Systems

Support content lives across multiple systems (CRMs, intranets, shared drives, ticketing platforms) with no unified layer to connect them. More than half of organizations (54%) use more than five different platforms for documenting and sharing information.

Agents toggle between tools, and customers receive inconsistent answers depending on who picks up. That inconsistency shows up in the numbers: only 33% of customer service managers believe their agents can easily find the information needed to assist customers.

Content Decay and Staleness

Knowledge bases grow stale quickly as products update, policies change, and new issues emerge. Traditional systems have no built-in mechanism to flag outdated articles or suggest updates. 62% of customer service agents report that materials such as help pages are not up-to-date.

Agents and bots working from incorrect information create customer frustration, compliance risks, and unnecessary escalations.

The Onboarding Burden

New agents must spend weeks learning where information lives and how to search for it effectively. New contact center agents can take up to 3 months to reach full productivity, and 46% of managers agree it takes too long to onboard new employees with needed knowledge.

That ramp-up carries a real price tag. Onboarding a single agent can cost anywhere from a few thousand dollars to well over $10,000. With contact center turnover running at 30–40% annually, those costs compound fast.
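To show how quickly those costs compound, here is a back-of-the-envelope calculation. The 500-agent headcount and the $3,000 low-end onboarding cost are illustrative assumptions chosen from the ranges above, not measured data.

```python
def annual_replacement_cost(headcount: int, turnover_rate: float, cost_per_hire: float) -> float:
    """Yearly spend on replacing agents: hires needed per year times the cost to onboard each."""
    hires_per_year = headcount * turnover_rate
    return hires_per_year * cost_per_hire


# Hypothetical 500-agent center at the two ends of the ranges cited above.
low = annual_replacement_cost(500, 0.30, 3_000)    # 150 hires x $3,000
high = annual_replacement_cost(500, 0.40, 10_000)  # 200 hires x $10,000
print(f"${low:,.0f} to ${high:,.0f} per year")
```

Under these assumptions, the same center spends between roughly $450,000 and $2,000,000 a year simply replacing agents who leave.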

Scale Breaks the System

What works for a 20-agent team breaks down in a 500-agent contact center or when support spans multiple channels (chat, voice, email, social). At underperforming organizations, 58% of agents must toggle between multiple screens to find what they need, a friction point that multiplies with every channel added.

How a Generative AI Knowledge Base Works in a Support Context

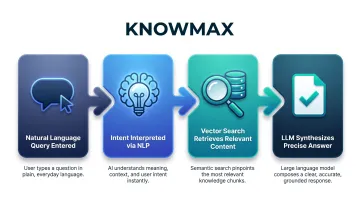

The End-to-End Flow

Here's what happens when a customer or agent poses a question:

- Natural language query is entered — "customer can't log in after password reset"

- System interprets intent using NLP — understands this as an authentication troubleshooting issue

- Vector search retrieves relevant content chunks — pulls step-by-step guides from the knowledge repository

- LLM synthesizes a precise answer — generates a human-readable response grounded in that specific content

This entire process happens in seconds, delivering agents what they need without manual searching.
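The vector search step in the flow above depends on two pieces: splitting articles into overlapping chunks, and ranking those chunks by vector similarity to the query. The sketch below makes several assumptions. It uses a toy hashed bag-of-words `embed` function in place of a real embedding model, and the chunk size, overlap, and `VectorIndex` class are hypothetical choices for illustration. Production systems typically use a learned embedding model and an approximate-nearest-neighbor index instead of a linear scan.

```python
import math
import re
import zlib
from typing import Dict, List, Tuple

DIM = 1024  # hypothetical embedding width


def embed(text: str) -> List[float]:
    """Toy embedding: hashed bag of words, L2-normalized (stand-in for a real model)."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def chunk(text: str, size: int = 12, overlap: int = 4) -> List[str]:
    """Split text into overlapping word windows so search returns a passage, not a whole article."""
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


class VectorIndex:
    def __init__(self, articles: Dict[str, str]):
        # One entry per chunk: (article id, chunk text, chunk vector).
        self.entries = [(doc_id, c, embed(c)) for doc_id, text in articles.items() for c in chunk(text)]

    def search(self, query: str, k: int = 3) -> List[Tuple[str, str, float]]:
        q = embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(doc_id, c, sum(a * b for a, b in zip(vec, q))) for doc_id, c, vec in self.entries]
        scored.sort(key=lambda entry: entry[2], reverse=True)
        return scored[:k]


index = VectorIndex({
    "kb-205": "If a customer cannot log in after a password reset, confirm the reset link was used "
              "within 24 hours, then clear cached credentials in the app and ask the customer to retry.",
    "kb-310": "Refunds are processed within five business days of approval and appear on the next statement.",
})
for doc_id, passage, score in index.search("customer can't log in after password reset", k=2):
    print(f"{score:.2f} {doc_id}: {passage}")
```

The retrieved passages, not whole articles, are what the language model would then receive as context for the final synthesis step.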

Intent-Based Search vs. Keyword Search

Keyword search looks for exact term matches. If an agent types "customer can't log in after password reset," the system searches for articles containing those specific words—and often misses the best answer if it's phrased differently.

Intent-based search understands this as an authentication issue and surfaces step-by-step troubleshooting guides, even if those articles never contain that exact phrasing. Industry consultants recommend hybrid search, which combines keyword precision with semantic understanding, for stronger search accuracy.
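One common way to implement hybrid search is reciprocal rank fusion (RRF), which merges a keyword ranking and a semantic ranking by giving each document a score of 1/(k + rank) in every list it appears in. The sketch below is an illustration with assumptions: the article ids are hypothetical, the semantic ranking is hard-coded as if it came from a vector search, and k = 60 is a conventional default rather than a tuned value.

```python
import re
from collections import defaultdict
from typing import Dict, List


def keyword_rank(query: str, articles: Dict[str, str]) -> List[str]:
    """Rank articles by how often the exact query terms appear; drop articles with no match."""
    terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    hits = {}
    for doc_id, text in articles.items():
        count = sum(1 for t in re.findall(r"[a-z0-9]+", text.lower()) if t in terms)
        if count:
            hits[doc_id] = count
    return sorted(hits, key=hits.get, reverse=True)


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge ranked lists: each document scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


articles = {
    "kb-101": "Password reset steps: open Settings and choose Reset Password.",
    "kb-205": "Authentication troubleshooting when sign-in fails after a credential change.",
    "kb-310": "Refund policy and processing times.",
}
keyword = keyword_rank("password reset login", articles)
semantic = ["kb-205", "kb-101"]  # hypothetical output of a vector search for the same query
print(keyword, reciprocal_rank_fusion([keyword, semantic]))
```

The keyword pass finds only the article that literally contains "password reset". The (assumed) semantic pass also surfaces the authentication troubleshooting guide. Fusion keeps both, and ranks highest the document that both methods agree on.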

This matters because agents ask questions conversationally, and customers describe issues in endless variations. Intent-based systems handle this variability without requiring agents to guess the "right" keywords.

AI Authoring That Keeps Knowledge Current

Generative AI doesn't just deliver knowledge—it helps create and maintain it:

- Content gap detection — AI tools analyse unresolved queries and suggest missing articles based on patterns

- Automatic rephrasing — Dense SOPs are rephrased into clear agent-facing instructions

- Summarisation — Lengthy documents are condensed into scannable key points

- Freshness flags — Articles that haven't been reviewed recently are flagged for updates

These capabilities help the knowledge base evolve with the business while reducing the editorial burden on knowledge managers.
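The freshness-flag idea reduces to a date comparison. This minimal sketch assumes each article records a `last_reviewed` date. The `Article` class, the 90-day threshold, and the sample ids are hypothetical. Real platforms may also trigger reviews on product releases or policy changes, which this sketch does not model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class Article:
    article_id: str
    last_reviewed: date


def flag_stale(articles: List[Article], today: date, max_age_days: int = 90) -> List[str]:
    """Return ids of articles not reviewed within max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [a for a in articles if a.last_reviewed < cutoff]
    return [a.article_id for a in sorted(stale, key=lambda a: a.last_reviewed)]


articles = [
    Article("kb-101", date(2024, 6, 1)),
    Article("kb-205", date(2024, 3, 31)),
    Article("kb-310", date(2024, 1, 15)),
    Article("kb-412", date(2024, 4, 1)),
]
print(flag_stale(articles, today=date(2024, 6, 30)))
```

With a 90-day window ending on 30 June 2024, the cutoff is 1 April. The two articles reviewed before that date are flagged, oldest first, and the article reviewed exactly on the cutoff is not.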

Guided Resolution Flows

Keeping knowledge current is only half the equation. In a support context, generative AI also drives structured, step-by-step resolution paths rather than just answering one-off questions.

Platforms like Knowmax combine generative AI knowledge delivery with interactive decision trees that guide agents through complex issues in real time. The AI auto-traverses steps based on CRM data or customer responses, consistently keeping agents on the correct resolution path. This reduces escalations, prevents errors, and standardizes responses across teams, which is particularly valuable for compliance-sensitive industries.
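Auto-traversal can be pictured as walking a tree in which some steps can be answered from the CRM record and others need live input. This sketch is not Knowmax's implementation; it is a hypothetical billing flow built on assumptions. The `Node` structure, the `crm_field` names, and the resolutions are illustrative. The walk follows branches answered by CRM data and stops at the first step the agent must ask the customer about.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Tuple


@dataclass
class Node:
    prompt: str                      # question for the agent, or resolution text at a leaf
    crm_field: Optional[str] = None  # CRM attribute that can answer this step automatically
    branches: Dict[Any, "Node"] = field(default_factory=dict)  # answer -> next step


def auto_traverse(root: Node, crm_record: Dict[str, Any]) -> Tuple[Node, List[str]]:
    """Follow branches answered by CRM data; stop at a leaf or at the first step needing live input."""
    node, path = root, []
    while node.branches:
        answer = crm_record.get(node.crm_field) if node.crm_field else None
        if answer not in node.branches:
            return node, path  # the agent must ask the customer at this step
        path.append(f"{node.prompt} -> {answer!r} (from CRM)")
        node = node.branches[answer]
    return node, path


tree = Node("Which plan is the customer on?", "plan", {
    "prepaid": Node("Is auto-recharge enabled?", "auto_recharge", {
        True: Node("Resolution: retry the failed recharge from the billing console."),
        False: Node("Resolution: walk the customer through a manual top-up."),
    }),
    "postpaid": Node("Does the customer see a payment error code?", None, {
        "yes": Node("Resolution: look up the error code in the billing SOP."),
        "no": Node("Resolution: escalate to billing tier 2."),
    }),
})

step, path = auto_traverse(tree, {"plan": "prepaid", "auto_recharge": False})
print(step.prompt, path)
```

For a prepaid customer with auto-recharge off, both questions are answered from CRM data and the agent lands directly on the resolution. For a postpaid customer, the walk pauses at the error-code question, because no CRM field can answer it.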

Key Benefits for Customer Support Operations

Reduced Average Handle Time and Faster Resolutions

When agents get precise, synthesized answers instantly rather than scanning multiple articles, they resolve queries faster. AI-assisted interactions show a 20–35% decrease in AHT compared with unassisted ones.

A landmark study of approximately 5,000 agents at a Fortune 500 company found that customer support agents using a generative AI tool saw a 13.8% increase in issues resolved per hour. For novice agents, the improvement was even more dramatic—35% productivity gains.

For high-volume contact centers, the numbers add up fast. With the median cost per human-assisted contact at $13.50, a 20% AHT reduction across thousands of daily interactions produces significant savings without headcount changes.
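The savings arithmetic is easy to make explicit. This sketch assumes that per-contact cost scales linearly with handle time, which is a simplification because some costs are fixed. It also uses a hypothetical volume of 5,000 contacts per day and 260 operating days per year.

```python
from typing import Tuple


def aht_savings(contacts_per_day: int, cost_per_contact: float = 13.50,
                aht_reduction: float = 0.20, days_per_year: int = 260) -> Tuple[float, float]:
    """Estimate (daily, annual) savings, assuming contact cost scales linearly with handle time."""
    daily = contacts_per_day * cost_per_contact * aht_reduction
    return daily, daily * days_per_year


daily, annual = aht_savings(5_000)  # hypothetical volume
print(f"daily: ${daily:,.0f}  annual: ${annual:,.0f}")
```

Under these assumptions, a 20% AHT reduction on 5,000 daily contacts is worth about $13,500 a day, or roughly $3.5 million a year.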

Improved First Call Resolution and Consistency

When every agent—regardless of experience level—has access to the same accurate, up-to-date answer, response quality becomes consistent across channels and shifts. This directly improves FCR rates and CSAT scores.

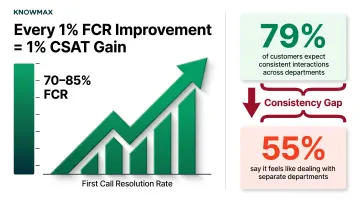

Industry FCR benchmarks range from 70-85%, with SQM Group reporting that for every 1% improvement in FCR, there is a corresponding 1% improvement in CSAT.

That FCR gain depends heavily on answer consistency—which most organizations still struggle with. 79% of customers expect consistent interactions across departments, yet 55% say it generally feels like they're communicating with separate departments. A generative AI knowledge base closes that gap by surfacing the same verified answer across every channel and shift.

Faster Agent Onboarding and Reduced Training Costs

New agents can rely on the knowledge base as an always-available expert guide, compressing ramp-up time. The same NBER study found that agents with two months of tenure and AI assistance performed comparably to unassisted agents with six months of tenure.

Knowmax customers have reported measurable gains across the onboarding process:

- 40% reduction in onboarding time through centralized knowledge and interactive training modules

- Real-time content updates that keep new hires aligned with the latest protocols from day one

- Accelerated time-to-competency, with junior agents reaching senior-level performance benchmarks significantly faster

Given that replacing a single agent can cost well over $10,000 at the high end, getting new hires productive faster is one of the highest-leverage investments a contact center can make.

Real-World Applications in Customer Support

Agent Assist During Live Interactions

In voice or chat support, the generative AI knowledge base surfaces the right answer in real time as the agent types or the customer speaks. It acts as a co-pilot that cuts hold time and reduces after-call work.

For example, when integrated with platforms like Salesforce or Genesys, agents can search and share knowledge without leaving their workflow—eliminating screen toggling and context-switching that slows resolution.

AI-Powered Self-Service and Chatbots

Generative AI knowledge bases power customer-facing bots that go beyond scripted FAQs. These bots understand complex questions and generate accurate, conversational responses grounded in verified company knowledge rather than open web data.

One Knowmax customer handled over 3.7 million chatbot transactions while improving knowledge access for over 120 agents, achieving a measurable drop in AHT and improved CSAT scores.

Because the AI retrieves answers from the same knowledge base agents use, customers receive consistent information whether they self-serve or escalate to a human.

Omnichannel Knowledge Consistency

That consistency doesn't stop at self-service. The same generative AI knowledge base serves chat, email, voice, and social channels—so a customer gets the same answer whether they reach out via WhatsApp or phone.

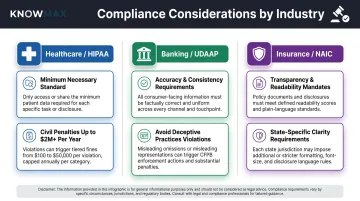

This consistency is essential for brand trust and compliance-sensitive industries. For example:

- Healthcare (HIPAA): The Privacy Rule's Minimum Necessary Standard requires covered entities to make reasonable efforts to limit uses and disclosures of protected health information to the minimum necessary. Civil penalties for non-compliance range from $34,464 to $2,067,813 per year.

- Banking (UDAAP): Financial institutions must ensure customer-facing information is accurate and consistent to avoid violations involving unfair, deceptive, or abusive acts or practices.

- Insurance (NAIC): Transparency and readability requirements mandate that consumers receive enough information to compare policies, with state-specific requirements for clarity and consistency.

Generative AI knowledge bases help every channel deliver compliant, consistent answers, reducing regulatory risk and building customer trust.

How to Choose the Right Generative AI Knowledge Base Platform

Intent-Based, Semantic Search (Not Just Keywords)

The platform must understand what users are asking—not just match terms—to surface accurate results for complex, real-world support queries. When evaluating options, confirm the system supports:

- Vector search that maps meaning, not just word frequency

- Intent recognition that handles ambiguous or multi-part queries

- Contextual disambiguation so agents get the right answer even when phrasing varies

AI Authoring and Content Management Capabilities

The platform should include tools that help teams create, update, summarize, and translate knowledge content efficiently—reducing the editorial burden on knowledge managers.

Platforms like Knowmax offer AI authoring tools capable of:

- Rephrasing dense content for clarity

- Summarizing lengthy articles into digestible formats

- Auto-translating content into 25+ languages for global support teams

- Detecting content gaps based on unresolved queries

That last point matters more than it sounds — content gaps identified from real query failures are far more actionable than periodic manual audits.
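Here is a minimal sketch of gap detection from failed queries. It assumes a log of queries that ended without a resolving article. Grouping queries by a normalized set of content words is a deliberately crude stand-in for the embedding-based clustering a production platform would more likely use. `STOPWORDS`, `topic_key`, and the `min_count` threshold are all hypothetical.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Hypothetical filler words ignored when grouping queries.
STOPWORDS = {"a", "an", "the", "to", "my", "i", "how", "do", "can", "is",
             "for", "on", "in", "of", "with", "why", "not", "t"}


def topic_key(query: str) -> str:
    """Normalize a query to a sorted set of content words so rephrasings group together."""
    words = {w for w in re.findall(r"[a-z0-9]+", query.lower()) if w not in STOPWORDS}
    return " ".join(sorted(words))


def content_gaps(unresolved: Iterable[str], min_count: int = 3) -> List[Tuple[str, int]]:
    """Topics that repeatedly went unresolved, most frequent first: candidates for new articles."""
    counts = Counter(topic_key(q) for q in unresolved)
    return [(topic, n) for topic, n in counts.most_common() if n >= min_count]


unresolved_log = [
    "How do I export invoices to PDF?",
    "export invoices to pdf",
    "Export my invoices in PDF",
    "Why is my SIM not activating?",
    "SIM not activating",
    "Where is my parcel",
]
print(content_gaps(unresolved_log))
```

Three differently worded invoice-export questions collapse into one recurring topic, which surfaces as a gap worth an article. Topics that failed only once or twice stay below the threshold.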

Of course, even well-authored content fails agents if it lives in a separate tab they have to hunt for. That's where integration becomes the deciding factor.

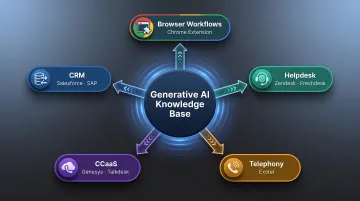

Deep Integration with Existing Support Infrastructure

A generative AI knowledge base that doesn't connect with the CRM, helpdesk, or telephony platform creates another data silo. Look for native integrations with platforms like:

- CRM: Salesforce, SAP

- Helpdesk: Zendesk, Freshdesk

- CCaaS: Genesys, Talkdesk

- Telephony: Exotel

- Browser-based workflows: Chrome extensions for accessing knowledge across any platform

These integrations ensure knowledge flows where agents already work, eliminating screen toggling and keeping agents focused on the customer rather than the interface.

Frequently Asked Questions

What is a knowledge base in generative AI?

A knowledge base in generative AI is a repository of organizational content that an AI system (powered by LLMs and RAG) uses to retrieve and generate accurate, grounded answers to user queries—rather than relying on pre-trained internet data alone.

What is an example of a generative AI knowledge base?

One example is a contact center platform where an agent types a customer issue in natural language and the system instantly generates a suggested resolution by pulling from internal SOPs, policy documents, and product guides. Agents get precise answers without manually searching through articles.

How can AI be used to build a knowledge base?

AI can auto-generate articles from resolved ticket data, suggest content gaps from unanswered queries, summarize long documents into agent-ready guides, and flag outdated content for review—cutting the manual effort of content creation and upkeep.

What are the basics of generative AI?

Generative AI refers to AI models (like LLMs) that can produce new content—text, answers, summaries—by learning patterns from large datasets, enabling them to respond to novel queries in natural language rather than following fixed rules.

What is the difference between assistive AI and generative AI?

Assistive AI helps users complete specific tasks using predefined rules or recommendations (for example, suggesting the next action). Generative AI, by contrast, creates new content or answers by understanding context, making it far more flexible for dynamic knowledge retrieval in support scenarios.