Introduction

AI agents embedded in knowledge base platforms have evolved from passive search tools into autonomous actors — retrieving content, surfacing decision trees, drafting responses, and escalating queries — all without a human approving each step. This autonomy delivers real efficiency gains. But without a structured audit framework, organizations cannot trace which agent decision caused a knowledge error, a compliance gap, or a degraded customer experience.

Only 2% of organizations claim full visibility into the AI models they use, according to IBM's 2024 enterprise AI governance study. At the same time, 51% of organizations using AI have experienced at least one negative consequence, with roughly one-third reporting issues tied to AI inaccuracy.

When AI agents operate inside knowledge platforms handling billing disputes, healthcare queries, financial guidance, or telecom troubleshooting, those errors translate directly into business, legal, and reputational damage.

This guide explains how to build an audit-ready AI agent activity framework within knowledge base platforms, covering what to log, how to implement structured auditing, and which compliance standards apply.

TL;DR

- AI agent activity auditing logs every action inside a knowledge base platform — queries, retrievals, responses, and escalations — with identity, authorization, and timestamp context

- Without this visibility, knowledge errors, unauthorized content changes, and compliance breaches cannot be traced to their source

- A complete audit trail links each request to the KB content retrieved, the response produced, and the agent identity that authorized it

- Aggregated audit data surfaces knowledge gaps, poor retrieval patterns, and escalation failures that degrade support quality

- Compliance with GDPR, SOC 2, ISO 27001, and HIPAA requirements underpins audit-ready AI interactions across regulated enterprise contact centers

Why Auditing AI Agent Activity Matters in Knowledge Management

AI agents in KB platforms handle high-stakes customer interactions — billing disputes, healthcare queries, financial guidance, telecom troubleshooting — where incorrect responses or unauthorized actions carry direct business, legal, and reputational consequences.

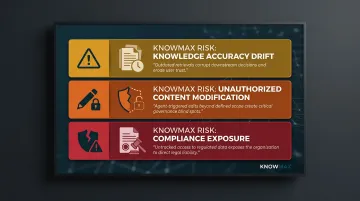

Three specific risk categories make auditing essential:

- Knowledge accuracy drift: When an agent repeatedly retrieves deprecated product specs or superseded policy language, every downstream interaction inherits that error. Without retrieval audit trails, knowledge managers have no way to identify or fix the source.

- Unauthorized content modification: Agents editing or summarizing articles outside their defined scope create governance gaps. AI-generated summaries may omit compliance language; auto-translations can alter meaning in ways that violate regulatory requirements. Audit logs need before/after content states with agent identity to enable rollback.

- Compliance exposure: Agents accessing regulated customer data without a traceable authorization record create direct liability. 48% of executives worry that AI may introduce misinformation into company datasets, per Deloitte's 2026 Human Capital Trends report. In healthcare, banking, and insurance environments where HIPAA, GDPR, and SOC 2 apply, undocumented AI data access is a material compliance violation.

The business cost is substantial. McKinsey research shows that AI implementation has driven a 50% reduction in cost per call for some contact center vendors, with banking AI transformations identifying opportunities to cut 45% of contact center costs. Without audit controls, a single unchecked AI error can reverse those savings — through repeat contacts, regulatory penalties, or both.

What AI Agent Activities Should You Audit in a Knowledge Base?

Five activity categories generate the most actionable audit data. Each maps to a distinct failure mode — from retrieval gaps to unauthorized access — and requires its own logging approach.

Knowledge Retrieval and Search Behavior

Log every query the AI agent initiates inside the KB:

- The search term entered

- The articles retrieved and their ranking order

- Ranking signals applied (intent matching, freshness, usage analytics)

- Whether retrieved content matched customer intent

This record surfaces patterns where agents consistently return low-quality, outdated, or irrelevant knowledge. For example, if an agent repeatedly retrieves Article A for Query X but customers then escalate 70% of those interactions, the audit data flags either a content quality issue or a retrieval accuracy problem.
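
The Article A / Query X example can be sketched in a few lines of Python. This is a minimal illustration, not a platform API: the `RetrievalEvent` schema, the field names, and the `min_events` cutoff are assumptions made for the example, and the 70% threshold simply mirrors the figure used above.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RetrievalEvent:
    """One logged KB search. Hypothetical schema, not a platform API."""
    agent_id: str
    query: str
    article_ids: tuple       # retrieved article IDs, in ranking order
    ranking_signals: tuple   # e.g. ("intent_match", "freshness")
    escalated: bool          # whether the interaction later escalated
    timestamp: datetime


def pair_stats(events):
    """Return {(query, top_article): [total, escalated]} for logged events."""
    stats = defaultdict(lambda: [0, 0])
    for e in events:
        if not e.article_ids:
            continue  # searches with no results need their own report
        key = (e.query, e.article_ids[0])
        stats[key][0] += 1
        stats[key][1] += int(e.escalated)
    return dict(stats)


def flag_pairs(events, threshold=0.7, min_events=10):
    """List query/article pairs whose escalation rate meets the threshold."""
    flagged = []
    for key, (total, escalated) in pair_stats(events).items():
        if total >= min_events and escalated / total >= threshold:
            flagged.append(key)
    return sorted(flagged)


# Example: the same article is the top result ten times; seven sessions escalate.
now = datetime.now(timezone.utc)
events = [
    RetrievalEvent("agent-1", "query x", ("article-a", "article-b"),
                   ("intent_match", "freshness"), escalated=(i < 7), timestamp=now)
    for i in range(10)
]
print(flag_pairs(events))  # [('query x', 'article-a')]
```

A flagged pair does not say whether the content or the retrieval is at fault; it only tells the knowledge manager where to look first.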

Content Creation, Editing, and Update Events

Record all agent-triggered content changes:

- AI-generated summaries

- Rephrased articles

- New entry creation

- Auto-translations

Each event must include:

- Agent identity executing the change

- Timestamp of the modification

- Before/after content state

This enables rollback and accountability when knowledge quality degrades after an automated update. If an AI summarization tool consistently removes critical compliance disclaimers, the logged before/after states provide the evidence needed to identify and remediate the pattern.
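
As a rough sketch of what such a change record could look like, the Python below stores the before/after state with the agent identity and checks whether required wording was dropped. The `ContentChangeEvent` class, `dropped_phrases`, and `rollback` are hypothetical names for illustration; only `difflib.unified_diff` is a standard-library call.

```python
import difflib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ContentChangeEvent:
    """One agent-triggered edit. Hypothetical schema for illustration."""
    agent_id: str
    article_id: str
    before: str
    after: str
    timestamp: datetime

    def diff(self):
        """Unified diff of the change, for human reviewers."""
        return "\n".join(difflib.unified_diff(
            self.before.splitlines(), self.after.splitlines(),
            fromfile="before", tofile="after", lineterm=""))


def dropped_phrases(event, required_phrases):
    """Required phrases present before the edit but missing afterwards."""
    return [p for p in required_phrases
            if p in event.before and p not in event.after]


def rollback(event):
    """Return the content to restore: the stored pre-change state."""
    return event.before


event = ContentChangeEvent(
    agent_id="summarizer-01",
    article_id="kb-204",
    before="Refunds take 5 days.\nThis is not financial advice.",
    after="Refunds take 5 days.",
    timestamp=datetime.now(timezone.utc),
)
print(dropped_phrases(event, ["This is not financial advice."]))
print(event.diff())
```

Storing full before/after text is the simplest option; for large articles, a version ID pointing to a content store would serve the same purpose.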

Decision Tree Traversal and Guided Resolution Logs

Track every branch an agent executes during guided issue resolution:

- Each path selected

- Options skipped or bypassed

- The final resolution outcome

Systematic resolution failures rarely announce themselves — they show up in aggregate. When 40% of customers following Path B in a billing dispute tree escalate to a supervisor, audit logs reveal whether the decision tree logic is flawed or whether agents are bypassing required verification steps.
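
One way to separate those two explanations is to compare each logged path against the nodes a tree requires. The sketch below assumes a hypothetical `TraversalLog` record and a hand-maintained `REQUIRED_NODES` map; neither comes from a specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TraversalLog:
    """One guided-resolution session. Hypothetical schema."""
    session_id: str
    tree_id: str
    path: tuple      # node IDs in the order visited
    skipped: tuple   # options offered but bypassed
    outcome: str     # e.g. "resolved" or "escalated"


# Assumption: each tree declares the nodes that must always be visited.
REQUIRED_NODES = {"billing_dispute": {"verify_identity", "confirm_charge"}}


def missing_required(log):
    """Required nodes that this session never visited."""
    return REQUIRED_NODES.get(log.tree_id, set()) - set(log.path)


def escalation_split(logs):
    """Split escalated sessions by whether a required node was bypassed."""
    escalated = [log for log in logs if log.outcome == "escalated"]
    bypassed = [log for log in escalated if missing_required(log)]
    return {
        "escalated": len(escalated),
        "bypassed_required": len(bypassed),
        "followed_tree": len(escalated) - len(bypassed),
    }


logs = [
    TraversalLog("s1", "billing_dispute",
                 ("start", "verify_identity", "confirm_charge", "path_b"), (), "escalated"),
    TraversalLog("s2", "billing_dispute",
                 ("start", "path_b"), ("verify_identity",), "escalated"),
    TraversalLog("s3", "billing_dispute",
                 ("start", "verify_identity", "confirm_charge", "path_a"), (), "resolved"),
]
print(escalation_split(logs))
```

If most escalations fall under `followed_tree`, the tree logic is the likelier culprit; a high `bypassed_required` count points at agent behavior instead.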

Escalation and Handoff Decisions

Audit every event where an agent decides to escalate a query:

- What triggered the escalation (knowledge gap, policy exception, technical limitation)

- What context was passed downstream to the human agent

- Whether the handoff completed successfully

Escalation patterns directly map to KB coverage gaps. If agents escalate 30% of password reset requests, the audit trail reveals whether the issue stems from missing content, ambiguous instructions, or authorization constraints the agent cannot handle autonomously.

Policy Guardrail and Permission Events

Log every instance where an agent attempted an action outside its authorization scope:

- Accessing restricted content

- Invoking unavailable tools

- Generating a response type it is not permitted to produce

Record whether the guardrail blocked or permitted the action. Fifteen unauthorized PHI access attempts in a single day, for instance, point to either a misconfigured permission model or an active security incident, and neither surfaces without this log layer in place.
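
A daily count of denied actions per agent is enough to surface a pattern like the PHI example. The following is a minimal sketch; the `GuardrailEvent` fields and the default threshold of 15 are assumptions chosen to match the example above, not recommended values.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class GuardrailEvent:
    """One guardrail decision. Hypothetical schema for illustration."""
    agent_id: str
    action: str       # e.g. "read_phi"
    resource: str
    permitted: bool   # True if the guardrail allowed the action
    timestamp: datetime


def denied_per_day(events):
    """Count blocked actions per (agent_id, calendar day)."""
    counts = Counter()
    for e in events:
        if not e.permitted:
            counts[(e.agent_id, e.timestamp.date())] += 1
    return counts


def alerts(events, threshold=15):
    """Return (agent_id, day) pairs with at least `threshold` denials."""
    return sorted(key for key, n in denied_per_day(events).items()
                  if n >= threshold)
```

Permitted actions are logged through the same record type, so the same data also supports reviews of what agents were allowed to do.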

How AI Agent Activity Auditing Works – Step by Step

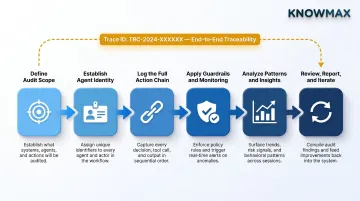

The six stages below follow the complete lifecycle of a robust audit program — from defining what to watch to using audit insights to improve the knowledge base. Skipping any stage creates blind spots that only surface during incidents or compliance reviews.

Step 1 – Define the Audit Scope

Identify which agent workflows within the KB platform require full audit coverage:

- Customer-facing resolution flows

- Internal agent-assist interactions

- Content management automations

Map the applicable compliance obligations for each. Getting scope right prevents both under-logging (coverage gaps) and over-logging (cost and privacy risk). For example, a healthcare knowledge base supporting patient intake requires full HIPAA audit coverage, while an internal IT troubleshooting KB may only need SOC 2 access controls.

Step 2 – Establish Agent Identity and Authorization Context

Assign a unique, non-shared identity to each AI agent or agent workflow so every logged action is attributable to a specific entity. Shared service accounts destroy forensic value: if five different agent workflows share one credential, investigators cannot determine which workflow triggered an incident.

Embed role-scoped authorization context in every log entry:

- What the agent is permitted to access

- Which actions it is authorized to execute

- Which data types it can process

This context enables both real-time guardrail enforcement and post-incident forensic analysis.
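
To make the idea concrete, here is a small sketch of a log entry that carries the agent's authorization context alongside the action. The `AgentIdentity` class, the field names, and the example scopes are all hypothetical; a real deployment would draw them from its identity provider.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Identity for one agent workflow. Hypothetical; never shared."""
    agent_id: str
    role: str
    allowed_content: frozenset
    allowed_actions: frozenset
    allowed_data_types: frozenset


def build_log_entry(agent, action, content_scope, data_type):
    """Build a JSON-serializable entry embedding authorization context."""
    authorized = (action in agent.allowed_actions
                  and content_scope in agent.allowed_content
                  and data_type in agent.allowed_data_types)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent.agent_id,
        "role": agent.role,
        "action": action,
        "content_scope": content_scope,
        "data_type": data_type,
        "authorization": {
            "allowed_content": sorted(agent.allowed_content),
            "allowed_actions": sorted(agent.allowed_actions),
            "allowed_data_types": sorted(agent.allowed_data_types),
        },
        "authorized": authorized,
    }


agent = AgentIdentity(
    agent_id="billing-assist-01",
    role="billing_support",
    allowed_content=frozenset({"billing_articles"}),
    allowed_actions=frozenset({"read", "summarize"}),
    allowed_data_types=frozenset({"account_metadata"}),
)
entry = build_log_entry(agent, "edit", "billing_articles", "account_metadata")
print(json.dumps(entry, indent=2))
```

Recording the permissions in force at the time of the action means investigators do not have to reconstruct historical role definitions later.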

Step 3 – Log the Full Action Chain

Capture the complete sequence from trigger to outcome:

- The incoming query or event

- The KB articles or decision trees invoked

- The response generated

- The final resolution status

Each element must carry a trace ID linking it back to the originating request. This enables investigators to reconstruct any session end-to-end. For example, if a customer complains about receiving incorrect billing information, the trace ID connects every step: the customer query, the article retrieved, the response generated, and the agent identity responsible.
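
Session reconstruction from a trace ID might look like the sketch below. The record layout (`trace_id`, `seq`, `step`) and the four step names are assumptions that mirror the list above, not a fixed standard.

```python
import uuid

# Assumed step names, mirroring the action chain described above.
EXPECTED_STEPS = ("query", "retrieval", "response", "resolution")


def new_trace_id():
    """Generate a trace ID when the originating request arrives."""
    return uuid.uuid4().hex


def reconstruct(records, trace_id):
    """Return one trace's records in the order the steps occurred."""
    return sorted((r for r in records if r["trace_id"] == trace_id),
                  key=lambda r: r["seq"])


def missing_steps(records, trace_id):
    """List expected steps that were never logged for this trace."""
    seen = {r["step"] for r in reconstruct(records, trace_id)}
    return [s for s in EXPECTED_STEPS if s not in seen]


records = [
    {"trace_id": "t1", "seq": 2, "step": "response", "agent_id": "agent-1"},
    {"trace_id": "t1", "seq": 0, "step": "query", "agent_id": "agent-1"},
    {"trace_id": "t2", "seq": 0, "step": "query", "agent_id": "agent-2"},
    {"trace_id": "t1", "seq": 1, "step": "retrieval", "agent_id": "agent-1"},
]
print([r["step"] for r in reconstruct(records, "t1")])
print(missing_steps(records, "t1"))
```

A trace with missing steps is itself a finding: it means part of the chain is not being logged.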

Step 4 – Apply Guardrails and Real-Time Monitoring

Instrument guardrail checks to log both permitted and denied actions in real time, then establish behavioral baselines per agent identity to enable anomaly detection:

- Sudden spikes in content edits

- Unusual escalation rates

- Access pattern deviations

These deviations trigger automated alerts before incidents compound. If an agent that typically processes 50 queries per hour and rarely edits content suddenly executes 500 content modifications in an hour, that anomaly flags potential misconfiguration or compromise.
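
A simple baseline check can be written with the standard library alone. The sketch below uses a z-score against an agent's recent hourly counts; the z-score approach and the threshold of 3.0 are illustrative assumptions, and production systems often use more robust methods.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, z_threshold=3.0):
    """Flag `observed` if it deviates strongly from the agent's baseline.

    `baseline` is a list of recent hourly counts for one agent and one
    metric (for example, content edits per hour).
    """
    if len(baseline) < 2:
        return False  # not enough history to judge
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


hourly_counts = [48, 52, 50, 49, 51, 50]
print(is_anomalous(hourly_counts, 500))  # True
print(is_anomalous(hourly_counts, 51))   # False
```

Baselines should be kept per agent identity and per metric, since a normal edit rate for a content automation would be abnormal for a customer-facing agent.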

Step 5 – Analyze Patterns and Surface Insights

Use aggregated audit data to identify systemic issues:

- Knowledge gaps causing repeated escalations

- Content areas with high agent modification rates

- Query categories with consistently poor retrieval accuracy

Done consistently, audit analysis drives real KB improvements — not just compliance checkboxes. For example, if 60% of queries about "account suspension" result in escalations, audit analysis reveals whether the issue stems from missing content, ambiguous language, or decision tree logic failures.

Step 6 – Review, Report, and Iterate

Establish a regular audit review cadence. Audit logs should feed directly into KB governance workflows:

- Content review queues

- Permission updates

- Compliance reporting exports

Revise the audit framework whenever you add new agent capabilities, integrations, or data types. Static audit configurations become compliance blind spots as the platform evolves.

Compliance Standards and Best Practices for AI Agent Audit Logs

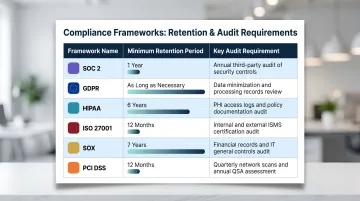

AI agent audit logging requirements map to four major frameworks relevant to enterprise KB platforms:

SOC 2 (Trust Services Criteria): Continuous evidence that access controls operated across the audit period is the core requirement. This covers logging successful and failed login attempts (CC6.1), monitoring system activity for anomalies (CC7.2), and evaluating security events to determine incident status (CC7.3). Industry standard: retain hot logs for 30–90 days, archived logs for one year.

GDPR (Articles 5, 22, 24, 35): Demonstrable purpose limitation and data minimization for agent-processed personal data are non-negotiable. Article 22 gives data subjects the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects, and the GDPR's transparency provisions (Articles 13 to 15) require controllers to provide meaningful information about the logic involved. Audit logs must show that AI agents operate within defined purposes and that automated decisions include human oversight where required.

HIPAA (45 CFR 164.312(b), 164.308(a)(1)(ii)(D)): Immutable records of every PHI access event an agent triggers are required. Unique user identification (164.312(a)(2)(i)) keeps each agent action traceable, while regular activity review (164.308(a)(1)(ii)(D)) mandates procedures covering audit logs, access reports, and security incident tracking.

Retention period: six years from date of creation or date last in effect, whichever is later.

ISO 27001:2022 (Annex A 8.15): Documented enforcement of access controls across all AI system integrations is required. Organizations must produce, store, and analyze logs covering user activities, authentication events, system exceptions, faults, and security events. Recommended retention: at least 12 months to demonstrate control effectiveness.

Quick-Reference: Framework Retention Requirements

| Framework | Minimum Retention | Key Audit Requirement |
|---|---|---|
| SOC 2 | 1 year (practical standard) | Access control evidence, anomaly monitoring |
| GDPR | Only as long as necessary | Purpose limitation, automated decision oversight |
| HIPAA | 6 years | Immutable PHI access records, traceable agent actions |
| ISO 27001 | 12 months | Activity logs, authentication events, security exceptions |
| SOX | 7 years | Financial system activity, change records |
| PCI DSS | 12 months (3 months immediately available) | Cardholder data access, system event logs |
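
When a log stream falls under several frameworks at once, the longest applicable period governs. The sketch below encodes the table's figures in months and computes the earliest date a record could be purged. The function names are hypothetical, and the calculation starts from the creation date only, so it does not model HIPAA's "date last in effect" alternative.

```python
import calendar
from datetime import date

# Retention in months, taken from the table above. GDPR is omitted because
# it sets no fixed period; it limits retention to what is necessary.
RETENTION_MONTHS = {
    "SOC 2": 12,
    "HIPAA": 72,
    "ISO 27001": 12,
    "SOX": 84,
    "PCI DSS": 12,
}


def add_months(start, months):
    """Add calendar months, clamping the day to the target month's length."""
    total = start.year * 12 + (start.month - 1) + months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_purge_date(created, frameworks):
    """Earliest purge date: the longest retention among the frameworks."""
    months = max(RETENTION_MONTHS[f] for f in frameworks)
    return add_months(created, months)


print(earliest_purge_date(date(2025, 1, 15), ["SOC 2", "HIPAA"]))  # 2031-01-15
```

Where GDPR also applies, the purge date works as an upper bound too: keeping personal data beyond the longest documented obligation needs its own justification.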

Three Non-Negotiable Audit Log Hygiene Practices

1. Write-once storage with cryptographic integrity checks: Logs must be append-only to prevent post-incident modification. Cryptographic hashing confirms that no entries were altered or deleted, which is a requirement auditors verify directly.

2. Set retention periods per framework, not a single blanket policy: Retention periods vary significantly, and a single default window will leave gaps:

- HIPAA: 6 years

- SOX: 7 years

- SOC 2: 1 year practical standard

- ISO 27001: 12 months minimum

- PCI DSS: 12 months, with 3 months immediately available

- GDPR: Only as long as necessary; must demonstrate compliance

3. Keep logs clean of raw sensitive data: Mask PII at ingestion and hash credentials before writing to the log. Reference large payloads by ID rather than full content. This preserves forensic utility while cutting storage overhead and privacy exposure.
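
The first and third practices can be sketched together: mask sensitive values before a record is written, then chain each entry to the previous one with a hash so later edits are detectable. Everything here is illustrative. The regular expressions are deliberately simple and will miss many PII formats, and `HashChainedLog` is an in-memory stand-in that detects tampering but does not replace write-once storage.

```python
import hashlib
import json
import re

# Simplistic patterns for illustration only; real PII detection needs more.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def payload_ref(payload):
    """Reference a large payload by digest instead of logging its content."""
    return "sha256:" + hashlib.sha256(payload.encode("utf-8")).hexdigest()


class HashChainedLog:
    """Append-only log where each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash, record):
        body = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, record):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        entry = {"record": record, "prev_hash": prev_hash,
                 "hash": self._digest(prev_hash, record)}
        self._entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash; False means an entry was altered."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            expected = self._digest(prev_hash, entry["record"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = HashChainedLog()
log.append({"agent_id": "agent-1",
            "query": mask_pii("Contact jane.doe@example.com or +1 415-555-0100")})
log.append({"agent_id": "agent-1", "payload": payload_ref("full transcript text")})
print(log.verify())  # True
```

A chain like this catches altered or removed entries in the middle of the log. Detecting truncation at the end additionally requires storing the latest hash somewhere the log writer cannot modify.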

Evolving Audit Configurations

Audit logging requires active maintenance. As KB platforms add new AI capabilities — new agent types, expanded channel integrations, broader tool access — audit scope must be reviewed and updated at each release cycle. A configuration that was complete six months ago may now miss entire agent workflows.

The EU AI Act (Article 12) requires that high-risk AI systems technically allow for automatic recording of events (logs) over the lifetime of the system, with high-risk obligations applying from August 2026. For remote biometric identification systems, Article 12 additionally specifies minimum log contents: the start and end date and time of each use, the reference database checked, the input data leading to a match, and the identification of the natural persons involved in verifying results.

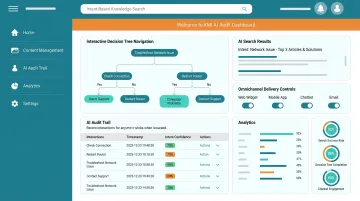

How Knowmax Enables Audit-Ready AI Agent Activity

Knowmax holds GDPR, SOC 2 Type II, ISO 27001, and HIPAA certifications — meaning audit controls are built into the platform architecture, not added on later. Organizations deploying AI agents in regulated environments — healthcare, banking, telecom, insurance — get the governance infrastructure needed for compliance from day one.

Compliance-Embedded Architecture

Knowmax's core AI features generate structured, traceable interaction records:

- Intent-based knowledge search logs every query, the articles retrieved, and ranking signals applied

- Interactive decision tree navigation tracks every branch executed, options skipped, and final resolution outcome

- AI author tools for content creation and summarization support versioned change management

- Omnichannel knowledge delivery maintains consistent audit trails across agent desktop, self-service portal, chatbot, mobile, and voice channels

Enterprise Integration for Unified Audit Visibility

Knowmax integrates with enterprise SIEM and CRM audit pipelines across Salesforce, Zendesk, Freshworks, and Genesys. This means audit data flows directly into existing compliance reporting workflows — no separate AI-specific toolchain required. Compliance teams get a unified view of AI agent activity without rebuilding their reporting stack.

Role-Based Access and Authorization Controls

Knowmax supports role-scoped agent identity and authorization controls. Each AI agent workflow can be assigned a unique, non-shared identity with defined permissions for content access and action execution. Every logged action is attributable to a specific entity. This supports post-incident review and satisfies unique user identification requirements under HIPAA and SOC 2.

Industry-Specific Deployment

Knowmax serves regulated verticals where AI agent auditing is most critical:

- Healthcare: HIPAA-compliant workflows cover patient data handling, agent-guided resolutions, and secure content access

- Banking: SOC 2 and GDPR controls support audit-ready knowledge for KYC checks, fraud dispute resolution, and loan navigation

- Telecom: Guided flows and visual troubleshooting guides maintain GDPR and SOC 2 compliance while reducing average handle time (AHT) and improving first contact resolution (FCR)

- Insurance: GDPR and HIPAA-aligned workflows trace every step of claims handling and policy guidance

A telecom client using Knowmax achieved a 21% improvement in FCR and handled 73% of transactions through AI chatbots, demonstrating the platform's ability to meet compliance and efficiency needs in highly regulated environments.

To see how Knowmax can be configured for your audit coverage and compliance reporting requirements, connect with the Knowmax team directly.

Frequently Asked Questions

What are the three key steps of a knowledge-based agent?

A knowledge-based agent follows three steps: perceiving and interpreting the input query, querying its knowledge base to retrieve relevant information, and generating a response based on the retrieved knowledge and any defined rules or guardrails.

What are the four different types of audit trails?

The four main types are system audit trails (OS-level events), application audit trails (software-specific actions), user audit trails (individual identity activity), and network audit trails (traffic and access events). AI agent auditing in KB platforms primarily spans the application and user trail types.

What are the 5 components of an AI agent?

The five core components are: perception module (input processing), knowledge base (stored information), reasoning engine (decision logic), action module (output and execution), and learning component (feedback and adaptation). Auditing should cover all five layers for comprehensive accountability.

What are the 7 types of AI agents?

The seven types are: simple reflex agents, model-based reflex agents, goal-based agents, utility-based agents, learning agents, hierarchical agents, and multi-agent systems. AI agents in knowledge base platforms most commonly operate as goal-based or model-based agents.

What is the difference between AI agent observability and auditing in a knowledge base?

Observability tracks system health and performance metrics — latency, retrieval quality, error rates — for operational troubleshooting. Auditing creates an immutable, identity-linked record of every agent action for compliance, accountability, and forensic reconstruction after an incident. Both are essential but serve different purposes.