Introduction

Healthcare support teams face mounting pressure on three converging fronts. Patient query volumes continue climbing, while administrative staff burnout has reached 45.6% according to a 2023 analysis covering 43,026 healthcare workers. At the same time, patient demand for 24/7 digital access is rising quickly: AI chatbot use for health information doubled from 16% to 32% in just 12 months.

That convergence puts one question squarely on the table: should healthcare organizations deploy agent assist AI — systems that keep humans in the loop — or commit to fully autonomous AI that handles interactions end-to-end?

Unlike retail or telecom, where a chatbot error might mean a delayed package or billing confusion, healthcare carries different stakes entirely. Mistakes in patient-facing support can affect treatment adherence, insurance access, and patient trust in care delivery.

The UnitedHealth nH Predict lawsuit, which alleges a 90% error rate in AI-driven coverage denials, illustrates the exposure organizations face when autonomous systems are used for consequential healthcare decisions.

TL;DR

- Agent assist keeps humans in control while AI surfaces knowledge, drafts responses, and guides resolution in real time

- Fully autonomous AI handles high-volume, low-risk queries at scale—best suited for scheduling, billing questions, and FAQs

- Healthcare faces unique constraints: HIPAA compliance, patient safety implications, and FDA/state oversight of clinical AI

- Neither model is universally superior—the right choice depends on query complexity, risk level, and regulatory requirements

- Start with agent assist, then expand AI autonomy only after validating accuracy on live query data

Agent Assist vs Fully Autonomous AI: Quick Comparison

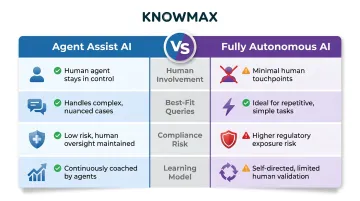

Human Involvement

Agent Assist: Human agent reviews AI-generated suggestions and makes final decisions. AI functions as a co-pilot, retrieving knowledge and suggesting next-best actions while the agent remains in control.

Fully Autonomous AI: AI resolves interactions independently from intake through closure. Humans intervene only for escalations or exceptions.

Best-Fit Query Types

Agent Assist:

- Insurance authorization and benefits interpretation

- Clinical protocol guidance requiring physician oversight

- Billing disputes involving financial liability

- Sensitive patient complaints or compliance issues

Fully Autonomous AI:

- Appointment scheduling and confirmation

- Facility information (hours, locations, parking)

- Portal login assistance and password resets

- Prescription refill status lookups

Compliance Risk in Healthcare

Agent Assist: Lower regulatory exposure because human oversight provides verification before responses are sent. This review step helps organizations meet HIPAA's minimum necessary standard and the FDA's clinical decision support criteria, which call for independent clinician review.

Fully Autonomous AI: Higher risk that demands extensive safeguards, including:

- HIPAA Business Associate Agreements

- Real-time PII redaction

- Defined escalation triggers

- State-level AI disclosure compliance (California AB 3030, Colorado SB 24-205)

- Continuous accuracy auditing

Learning and Improvement

Agent Assist: Every human correction feeds back into the system, improving accuracy without full model retraining. Over time, this captures institutional knowledge as agents refine AI suggestions — a compounding advantage that grows with use.

Fully Autonomous AI: Improves through periodic model updates and post-interaction feedback, but lacks the real-time correction signals that come from human-in-the-loop workflows.

What is Agent Assist AI in Healthcare Support?

Agent assist AI—also called human-in-the-loop AI—works alongside healthcare support agents in real time. It surfaces relevant protocols, suggests responses, flags compliance risks, and guides issue resolution while the human retains final decision authority.

Healthcare contact centers using agent assist report reduced average handle time (from the industry baseline of 3-6 minutes), lower error rates on complex queries, and faster agent onboarding. Gartner's 2026 survey found that 85% of service leaders are expanding agent responsibilities even as AI reduces contact volume—meaning the industry is investing in augmented agents, not replacing them.

That investment makes sense when you look at how agent assist works in practice. It uses guided decision trees, AI-powered knowledge search, and real-time response suggestions to help agents navigate complex healthcare scenarios. An agent handling a prior authorization query, for instance, receives step-by-step protocol guidance without toggling between systems—a capability Knowmax delivers through HIPAA-compliant workflows, role-based access control, and native EHR and CRM integrations.

There's also a compounding accuracy benefit. Every time an agent corrects an AI suggestion or edits a response, that correction becomes a training signal. The system improves without expensive model retraining—which matters when clinical protocols and insurance policies can change on short notice.
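A minimal sketch of how such a correction signal might be captured is shown here. The `CorrectionRecord` structure, the `record_correction` helper, and the 0.9 similarity cutoff are illustrative assumptions, not a description of any specific platform's implementation.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class CorrectionRecord:
    """One logged comparison between an AI draft and what the agent sent (hypothetical schema)."""
    query_type: str
    suggested: str     # text the AI drafted
    sent: str          # text the agent actually sent
    similarity: float  # 0.0 (completely rewritten) to 1.0 (unchanged)
    accepted: bool     # True when the draft went out nearly unchanged


def record_correction(query_type: str, suggested: str, sent: str,
                      accept_threshold: float = 0.9) -> CorrectionRecord:
    """Compare the AI draft with the agent's final text and label the outcome."""
    similarity = SequenceMatcher(None, suggested, sent).ratio()
    return CorrectionRecord(query_type, suggested, sent,
                            similarity, similarity >= accept_threshold)
```

Records like these could later be aggregated per query type to see where drafts are routinely rewritten. Because the stored text may contain PHI, any such log would need the same access controls as the underlying conversation.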

Use Cases of Agent Assist in Healthcare Support

Agent assist delivers the highest value in scenarios requiring nuance, empathy, or regulatory precision:

- Prior authorization inquiries, where agents follow multi-step approval workflows with plan-specific requirements

- Coverage and benefits questions, where policy language is ambiguous or eligibility criteria vary by plan

- Billing disputes involving multiple payers, providers, or coordination-of-benefits situations

- Medication refill coordination, where agents verify prescriptions and flag potential interaction concerns before routing to clinical staff

- Patient complaint resolution, where tone, empathy, and accuracy all carry weight

Agent assist is the default-safe choice for regulatory compliance. HIPAA's minimum necessary standard, FDA regulations requiring physician oversight for clinical guidance, and audit trail obligations all favor human-in-the-loop models. With a human verifying each response, organizations create a natural compliance checkpoint that fully autonomous systems have to engineer around—often at greater cost and risk.

What is Fully Autonomous AI in Healthcare Support?

Fully autonomous AI manages entire support interactions from intake to resolution without human involvement. Using natural language processing, knowledge retrieval, and decision logic, these systems respond to queries, route requests, or close tickets independently.

The operational case is clear: 24/7 availability without staffing costs, instant response times at scale, and consistent handling of high-volume repetitive queries. McKinsey's 2019 analysis found that automation could drive up to 30% cost savings for healthcare payers, with at least 30% of activities in roughly 60% of occupations potentially automatable.

Healthcare-specific risks, however, are hard to ignore. Autonomous AI can produce incorrect information about insurance coverage, medication interactions, or appointment protocols. Unlike retail, where a wrong product recommendation causes minor inconvenience, healthcare errors affect patient outcomes directly. The UnitedHealth lawsuit — alleging the nH Predict AI system denied elderly patients medically necessary care with a 90% error rate — illustrates both the litigation exposure and reputational damage when autonomous systems malfunction in consequential decisions.

Regulatory scrutiny is intensifying at both federal and state levels. The FDA's January 2026 Clinical Decision Support guidance clarifies that patient-facing AI tools resembling treatment recommendations may trigger medical device review requirements.

State-level rules are adding further pressure. California's AB 3030 requires GenAI disclosure in clinical communications, while Colorado's SB 24-205 mandates impact assessments and human review rights for high-risk AI in healthcare.

Use Cases of Fully Autonomous AI in Healthcare Support

Autonomous AI is reasonably safe and effective for specific, well-bounded query types:

- Scheduling, confirming, or rescheduling appointments and sending reminders

- Answering general FAQs about office hours, locations, parking, and visiting policies

- Resolving portal login issues — password resets and account access

- Checking prescription refill status without requiring clinical interpretation

- Confirming active coverage status from structured eligibility data

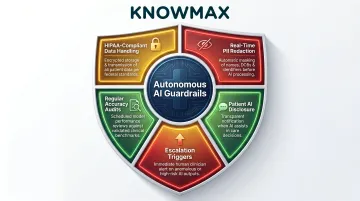

Before deploying autonomous AI in healthcare, organizations must implement clearly defined guardrails:

- HIPAA-compliant data handling with executed Business Associate Agreements

- Real-time PII redaction to prevent unauthorized data exposure

- Defined escalation triggers that route complex or high-risk queries to humans

- Regular accuracy audits with documented performance thresholds

- Clear disclosure to patients that they are interacting with AI (required in California and recommended by CMS)
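Two of these guardrails, PII redaction and escalation triggers, can be sketched in a few lines. The patterns, trigger phrases, and the 0.85 confidence floor below are hypothetical placeholders chosen for illustration; a production system would need far broader PHI detection and clinically reviewed trigger lists.

```python
import re

# Illustrative patterns only; real PHI/PII detection needs much wider coverage.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}

# Hypothetical phrases that should hand the conversation to a human.
ESCALATION_TERMS = ("chest pain", "overdose", "appeal", "denied", "side effect")


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label such as [SSN]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def should_escalate(text: str, confidence: float, min_confidence: float = 0.85) -> bool:
    """Escalate when the model is unsure or the message contains a trigger phrase."""
    lowered = text.lower()
    return confidence < min_confidence or any(term in lowered for term in ESCALATION_TERMS)
```

In this sketch, redaction would run before any text is logged or passed to a model, and `should_escalate` would be checked before an autonomous reply is sent.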

Agent Assist vs Fully Autonomous AI: Which Fits Healthcare Better?

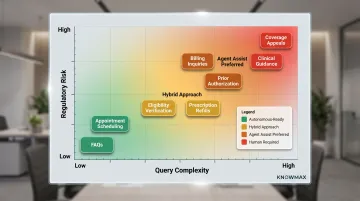

The right model should be determined by two axes: query complexity and error consequence. High complexity or high consequence = agent assist. Low complexity and low consequence = consider autonomous.
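Expressed as code, the two-axis rule might look like the sketch below. The `Level` enum and `recommend_model` function are hypothetical names, and collapsing each axis to two levels is a simplification made for illustration.

```python
from enum import Enum


class Level(Enum):
    LOW = 1
    HIGH = 2


def recommend_model(complexity: Level, consequence: Level) -> str:
    """Apply the two-axis rule: autonomy is considered only when both axes are low."""
    if complexity is Level.LOW and consequence is Level.LOW:
        return "consider autonomous"
    return "agent assist"
```

The asymmetry is deliberate: a single high rating on either axis is enough to keep a human in the loop.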

The healthcare regulatory environment structurally favors agent assist for most non-trivial interactions. HIPAA mandates for data handling, FDA requirements for physician oversight on clinical guidance, and, for organizations handling EU patient data, GDPR Article 22's restrictions on solely automated decisions all create compliance overhead for fully autonomous deployments.

GDPR classifies health data as a "special category" under Article 9. Solely automated decisions with legal or similarly significant effects that rely on such data generally require explicit consent or a substantial public interest basis, along with safeguards such as the right to obtain human intervention.

CMS's 2025 guidance reinforces this direction, mandating human oversight before AI systems are used for business decisions and requiring clear disclosure whenever AI is used in stakeholder-facing content. These constraints collectively shape which query types can realistically move toward automation — and which ones cannot.

When to Choose Agent Assist

Agent assist is the right choice when:

- Queries involve insurance coverage interpretation where errors create financial liability

- Clinical protocol guidance is required, triggering FDA oversight and physician review norms

- Sensitive patient situations demand empathy, judgment, or conflict resolution

- Any interaction could affect treatment decisions or access to care

Agent assist also reduces agent burnout and shortens onboarding time by surfacing accurate, real-time guidance at the point of need. For healthcare organizations facing high staff turnover, that efficiency compounds quickly.

Platforms like Knowmax support these workflows through HIPAA-compliant knowledge delivery, role-based access control, and integrations into Salesforce, Zendesk, Genesys, and EHR systems — keeping agents accurate without slowing them down.

When to Consider Fully Autonomous AI

Limited autonomous AI deployment makes sense for:

- Factual Tier-1 queries with no interpretation required (hours, locations, basic eligibility status)

- Post-interaction surveys collected after a human agent closes the case

- Appointment confirmations — simple yes/no responses with minimal downstream impact

- Self-service portal tasks: document downloads, form submissions, account navigation

Start with a graduated approach: Begin with agent assist to build an accuracy baseline. Identify query types where autonomous handling is consistently accurate and carries low regulatory risk. Expand automation only to those specific flows — this limits exposure to compliance or patient safety incidents during early deployment.
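The selection step in this graduated approach could be sketched as follows. The function name, the input shape (pairs of query type and whether the AI draft was correct, logged during agent assist), and the 98% accuracy and 200-sample thresholds are all assumptions for illustration; each organization would set its own documented thresholds.

```python
from collections import defaultdict


def autonomy_candidates(reviews, low_risk_types, min_accuracy=0.98, min_samples=200):
    """Return low-risk query types whose AI drafts were consistently correct.

    reviews: iterable of (query_type, was_ai_draft_correct) pairs.
    low_risk_types: set of query types already judged low in regulatory risk.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for query_type, was_correct in reviews:
        totals[query_type] += 1
        correct[query_type] += int(was_correct)
    return sorted(
        qt for qt in totals
        if qt in low_risk_types
        and totals[qt] >= min_samples
        and correct[qt] / totals[qt] >= min_accuracy
    )
```

Requiring a minimum sample size keeps a query type with a handful of lucky results from being promoted to autonomous handling too early.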

| Query Type | Automation Suitability | Regulatory Consideration |
|---|---|---|
| Appointment scheduling/reminders | Higher | Exempt from CA AB 3030 (administrative); low FDA risk |
| FAQs (hours, location, parking) | Higher | No PHI involved; minimal regulatory exposure |
| Eligibility/benefits verification | Moderate | Requires BAA; PHI access; minimum necessary standard applies |
| Prescription refill requests | Moderate | May trigger FDA CDS consideration if guidance is provided |
| Billing inquiries | Moderate | PHI involved; potential for consequential financial decisions |
| Prior authorization status | Lower | Colorado HB 26-1139 prohibits AI-only coverage decisions |
| Coverage appeals/disputes | Low | UnitedHealth litigation illustrates risk; GDPR Art 22 mandates human review |
| Clinical symptom guidance | Not suitable | FDA classifies patient-facing clinical tools as potential medical devices |

Conclusion

Healthcare support leaders should adopt a practical decision posture: agent assist should be the default starting point for most organizations. It delivers meaningful efficiency gains—faster resolutions, lower handle times, better agent accuracy—while keeping humans accountable for outcomes in a high-stakes environment.

Full autonomy is not off the table, but it should be earned through evidence, not assumed from day one. Organizations that start with agent assist, invest in **accurate and compliant knowledge management**, and track outcomes carefully are the ones that expand autonomous capabilities safely—not those that move fast and assume the AI will handle edge cases.

The choice between agent assist and autonomous AI is not a one-time decision. As your organization collects data on query types, error rates, and compliance exposure, you can identify where autonomy is genuinely safe and where human oversight remains essential.

Frequently Asked Questions

What is the difference between agent assist and fully autonomous agents in healthcare support?

Agent assist keeps a human agent in the decision loop, with AI providing real-time knowledge, suggestions, and workflow guidance. Fully autonomous AI resolves interactions end-to-end without human involvement. This distinction matters more in healthcare because patient safety and regulatory compliance requirements favor human verification for consequential decisions.

What are the main types of AI agents used in healthcare support?

Three primary categories cover most healthcare support deployments: agent assist tools (AI copilots, guided decision trees, knowledge management platforms), autonomous chatbots for tier-1 queries like scheduling and FAQs, and hybrid systems that route interactions between autonomous and human-assisted handling based on complexity and risk.

Is fully autonomous AI safe for healthcare customer support interactions?

Autonomous AI can be safe for low-risk query types like appointment scheduling and general FAQs. Deployment in patient-facing workflows requires:

- HIPAA compliance and Business Associate Agreements

- Real-time PII redaction and defined escalation triggers

- Regular accuracy audits and patient disclosure

What compliance certifications should I look for in healthcare AI support tools?

HIPAA is the baseline for any patient data handling. SOC 2 Type II validates enterprise security controls over time, while ISO 27001 covers the broader information security management system. GDPR compliance is also required for organizations handling EU patient data. Knowmax carries all four certifications.

When should healthcare support teams use agent assist vs autonomous AI?

Use agent assist for complex, sensitive, or high-consequence queries like insurance authorizations, billing disputes, and clinical guidance. Consider limited autonomous AI for high-volume, rule-based, low-risk interactions where errors are easily correctable—scheduling confirmations, office hours FAQs, and portal login assistance.

How does agent assist AI improve first call resolution in healthcare support?

Agent assist improves FCR by surfacing the right protocol, policy, or knowledge at the moment the agent needs it. Agents stop relying on callbacks or escalations to fill information gaps — handle time drops while human judgment stays in place for complex healthcare scenarios.